Fit-for-Purpose Real-World Data Assessments in Oncology: A Call for Cross-Stakeholder Collaboration

Kaushal D. Desai, MS, PhD, Sheenu Chandwani, MPH, PhD, Boshu Ru, PhD, Center for Observational and Real-World Evidence, Merck & Co, Inc, Kenilworth, NJ, USA; Matthew W. Reynolds, PhD, Jennifer B. Christian, PharmD, MPH, PhD, Real World Solutions, IQVIA, Rockville, MD, USA; Hossein Estiri, PhD, Harvard Medical School, Boston, MA, USA

Real-world evidence (RWE) remains a promising frontier in evidence generation to support improved health-related patient outcomes. It is defined as evidence derived from analyses of real-world data (RWD) (ie, data other than those from controlled clinical trials).1 RWE thus draws on a complex and diverse landscape of data from medical claims, electronic medical records (EMRs), genomic records, and disease registries, among others. These data sources provide a rich source of information for health economics and outcomes research (HEOR). Potential “use cases” of RWD for HEOR include determining disease burden and unmet healthcare needs, understanding the standard of care in real-world settings, developing realistic trial designs, studying patient-reported outcomes, and developing cost-effectiveness and budget impact models.

Fit-for-Purpose RWD: The Need for Consensus on Use Case Definitions

Regulatory and payer guidelines have highlighted the importance of “fitness for use,” also known as “fitness for purpose,” as a key factor that drives the choice of RWD and analytic methods for RWE generation.1,2 Terminologies have been proposed for data quality assessment,3 along with methods for determining RWD fitness for use4 and frameworks for optimizing use of RWE in drug coverage decisions.5 However, the meaning of fitness for use remains undefined for the full spectrum of RWD use cases in HEOR. There remains a need for clearly outlined “use-case specifications,” broadly defined as specifications of RWD requirements and criteria to evaluate RWD fitness for use for specific RWE use cases.

The heterogeneity of data types across different sources of data, and the fragmented data standards among different healthcare institutions and software programs, continue to pose challenges for researchers working with RWD. Indeed, the first goal listed under the first theme (Real-World Evidence) of ISPOR’s 2021 Science Strategy is to “develop criteria for evaluating the research readiness of real-world databases for HEOR purposes.”6

Many entities own and potentially can sell access to (commercialize) real-world databases. Currently, databases available for purchase include those from payers (eg, Optum), EMR software providers (eg, McKesson and Flatiron Health), as well as companies that aggregate and link data from multiple sources (eg, IQVIA). Academic institutions and private entities with access to in-house data also may own their data and collaborate with outside researchers (eg, Kaiser Permanente Center for Health Research). A key challenge in working with real-world datasets is to determine which databases are appropriate for purchase in order to support specific research needs. In our experience, little information is available regarding fitness for use of most commercially available datasets.

Combining Relevance and Quality Assessments for RWD Selection: The UReQA Framework

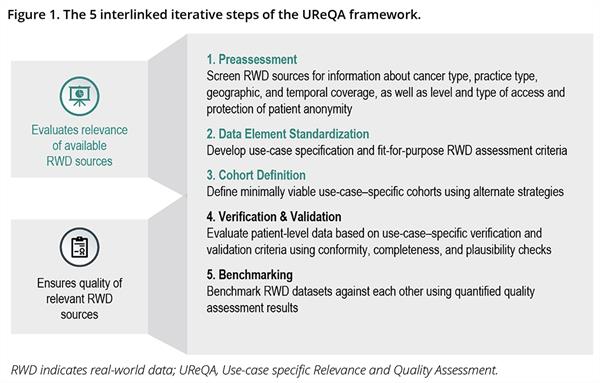

We have developed and refined a real-world database assessment tool named the Use-case specific Relevance and Quality Assessment (UReQA) framework, described in our poster presented at Virtual ISPOR 2021.7 The framework was developed for evaluation of commercial database offerings in the United States and is based on learnings from quality assessments to support retrospective outcomes studies in oncology. Our aim was to combine the “relevance” and “quality” dimensions of RWD assessment into a single framework to inform the choice of fit-for-purpose RWD to address specific scientific questions. The UReQA framework consists of 5 connected, iterative steps, beginning with:

(1) a preassessment step using a standard questionnaire for database providers to present high-level information about oncology-specific characteristics of the database, such as cancer type, practice type, and geographic and temporal coverage (Figure 1).7

Figure 1. The 5 interlinked iterative steps of the UReQA framework.

For databases determined by preassessment to meet use case requirements, the subsequent steps in the framework are then applied to assess the database relevance and quality in more detail.

The next step in the framework is:

(2) data element standardization, which entails developing use-case specification and assessment criteria appropriate for the research plan. The core components of a use-case specification include the following:

(a) A list of key data elements needed for the study

(b) For each key data element, the variable definitions, constraints, and formats (collectively termed “business rules”)

(c) A list of quality checks that establish internal and external validity for key data elements, and

(d) Clearly identified, use-case–specific quality thresholds for validation and benchmarking

The subsequent steps in the framework are applied in interlinked and iterative fashion as follows:

(3) Use of alternative strategies to define use-case–specific study cohorts

(4) Verification and validation of patient-level data against the list of prespecified quality checks, and

(5) Benchmarking real-world datasets for fit-for-purpose use in context of specific use cases

A use-case specification, therefore, outlines a blueprint for fit-for-purpose evaluation of RWD and may form the basis for agreement on quality assessment and reporting requirements across all relevant stakeholders.
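The four components (a) through (d) of a use-case specification can be pictured as a small data structure. The following is a minimal sketch only, not the UReQA implementation: the class names, field names, metric names, and threshold values are all hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class DataElement:
    """Components (a) and (b): a key data element and its "business rules"."""
    name: str
    definition: str
    fmt: str
    constraints: List[str] = field(default_factory=list)


@dataclass
class QualityCheck:
    """Components (c) and (d): a quality check with a use-case-specific threshold."""
    name: str
    element: str            # name of the data element the check applies to
    threshold: float        # hypothetical threshold, to be agreed by stakeholders
    higher_is_better: bool = True

    def passes(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


@dataclass
class UseCaseSpecification:
    use_case: str
    elements: List[DataElement]
    checks: List[QualityCheck]

    def evaluate(self, observed: Dict[str, float]) -> Dict[str, bool]:
        """Return pass/fail per check; a check with no observed metric fails."""
        return {
            c.name: c.name in observed and c.passes(observed[c.name])
            for c in self.checks
        }


# Hypothetical specification; every name and number below is illustrative.
rwttd_spec = UseCaseSpecification(
    use_case="rwTTD",
    elements=[
        DataElement("treatment_start_date", "date of first recorded dose",
                    "YYYY-MM-DD", ["not after date of last recorded dose"]),
        DataElement("biomarker_status", "result of biomarker test",
                    "positive|negative", ["one resolved result per test date"]),
    ],
    checks=[
        QualityCheck("pct_patients_with_start_date", "treatment_start_date", 95.0),
        QualityCheck("pct_same_day_conflicts", "biomarker_status", 1.0,
                     higher_is_better=False),
    ],
)

# Observed metrics here are made-up inputs, not results from any dataset.
report = rwttd_spec.evaluate({"pct_patients_with_start_date": 97.2,
                              "pct_same_day_conflicts": 3.5})
print(report)
```

Writing the specification down in a machine-readable form like this is one way a data provider and a sponsor could agree on thresholds before any patient-level data are examined.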

An Example of UReQA Framework Application: Real-World Time-to-Treatment Discontinuation

We illustrate the application of UReQA framework steps 2 through 5 by evaluating 2 datasets comprising anonymized EMR data of patients with advanced cancer (cohorts A and B) for estimating an established surrogate effectiveness endpoint for real-world oncology studies: real-world time-to-treatment discontinuation (rwTTD). rwTTD is defined as the time from the start of a systemic anticancer therapy to the time of discontinuation of that therapy for any reason, including death.7-9 In randomized controlled trials, the TTD for continuously administered anticancer medications has been associated with overall survival and with the length of time from drug initiation to disease progression or death. rwTTD is calculated as [(date of last recorded dose – date of first recorded dose) + 1 day] for the agent or regimen of interest within a specific setting or line of therapy.
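The date arithmetic in the bracketed formula can be written out directly. This is a minimal sketch: the function name `rw_ttd_days` is hypothetical, and the dates are synthetic. It covers only the formula itself, not the selection of agent, regimen, or line of therapy.

```python
from datetime import date


def rw_ttd_days(first_dose: date, last_dose: date) -> int:
    """(date of last recorded dose - date of first recorded dose) + 1 day."""
    if last_dose < first_dose:
        # A last dose before the first dose points to a data quality problem.
        raise ValueError("last recorded dose precedes first recorded dose")
    return (last_dose - first_dose).days + 1


# Synthetic example: a single recorded dose counts as 1 day on treatment.
single_dose = rw_ttd_days(date(2020, 1, 1), date(2020, 1, 1))
two_months = rw_ttd_days(date(2020, 1, 1), date(2020, 3, 1))
print(single_dose, two_months)
```

The "+ 1 day" term means a patient with one recorded dose contributes 1 day rather than 0 days.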

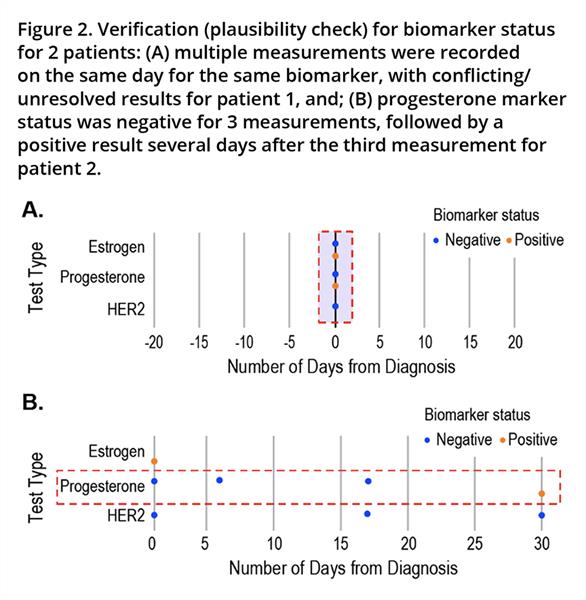

Key data elements required to estimate rwTTD include records of anticancer treatments administered, clinical visit dates, and mortality, in addition to biomarker testing results to define cancer type. The definitions of cohorts A and B involved stratification using biomarker test results, which in turn required unambiguous determination of biomarker status at specific timepoints with regard to rwTTD estimation. We plotted temporal trends in biomarker testing results as a key data element required for use-case specification and, as a verification check, discovered co-occurring (on the same day) and conflicting/unresolved biomarker results, illustrated for 2 patients in Figure 2.7

Figure 2. Verification (plausibility check) for biomarker status for 2 patients: (A) multiple measurements were recorded on the same day for the same biomarker, with conflicting/unresolved results for patient 1; and (B) progesterone marker status was negative for 3 measurements, followed by a positive result several days after the third measurement for patient 2.
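A check for co-occurring, conflicting biomarker results of the kind shown in panel A could look like the sketch below. It assumes a hypothetical record layout of (patient ID, biomarker, test date, result); the function name and all records are synthetic and are not drawn from cohorts A or B.

```python
from collections import defaultdict
from datetime import date
from typing import Dict, Iterable, List, Tuple

Record = Tuple[str, str, date, str]   # patient_id, biomarker, test_date, result
Key = Tuple[str, str, date]


def same_day_conflicts(records: Iterable[Record]) -> Dict[Key, List[str]]:
    """Return (patient, biomarker, date) keys with more than one distinct result."""
    results_by_key = defaultdict(set)
    for patient_id, biomarker, test_date, result in records:
        # Normalize case and whitespace so "Positive" and "positive " match.
        results_by_key[(patient_id, biomarker, test_date)].add(result.strip().lower())
    return {k: sorted(v) for k, v in results_by_key.items() if len(v) > 1}


# Synthetic records for illustration only.
records = [
    ("patient_1", "biomarker_x", date(2019, 3, 4), "Positive"),
    ("patient_1", "biomarker_x", date(2019, 3, 4), "negative"),
    ("patient_2", "progesterone", date(2019, 5, 1), "negative"),
    ("patient_2", "progesterone", date(2019, 5, 9), "positive"),
]
conflicts = same_day_conflicts(records)
print(conflicts)
```

This sketch flags only same-day disagreements. A change in status over time, as in panel B, is not flagged and would need a separate rule for which result defines biomarker status at the timepoint relevant to rwTTD.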

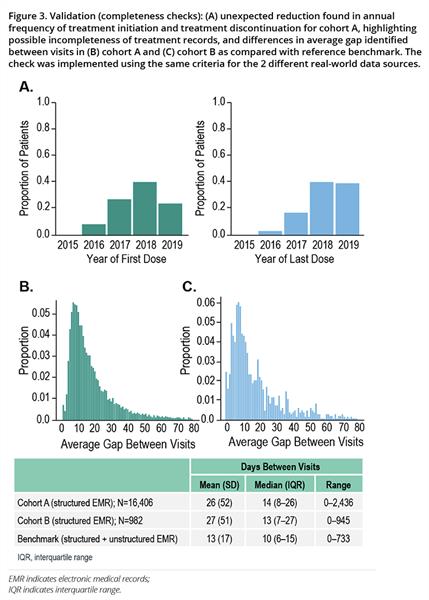

Validation checks for data completeness found an unexpected reduction in annual frequency of treatment initiation and treatment discontinuation, highlighting possible incompleteness or discrepancy among treatment records (Figure 3A), in addition to differences in the average gap identified between visits as compared with a reference benchmark (Figures 3B, 3C).

Figure 3. Validation (completeness checks): (A) unexpected reduction found in annual frequency of treatment initiation and treatment discontinuation for cohort A, highlighting possible incompleteness of treatment records, and differences in average gap identified between visits in (B) cohort A and (C) cohort B as compared with reference benchmark. The check was implemented using the same criteria for the 2 different real-world data sources.
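The two completeness checks summarized in Figure 3 can be approximated with simple counting. The sketch below is illustrative only: the function names, the 50% drop threshold, and the input values are assumptions, and the source does not state which thresholds or reference benchmark were used.

```python
from collections import Counter
from datetime import date
from statistics import mean
from typing import Dict, Iterable, List, Optional


def annual_counts(event_dates: Iterable[date]) -> Dict[int, int]:
    """Number of events (eg, treatment initiations) per calendar year."""
    return dict(sorted(Counter(d.year for d in event_dates).items()))


def flag_drops(counts: Dict[int, int], max_drop: float = 0.5) -> List[int]:
    """Years whose count fell by more than max_drop relative to the prior listed year.

    max_drop is a hypothetical threshold. Years absent from counts are not
    detected; callers should fill them with 0 if that matters.
    """
    years = sorted(counts)
    return [y for prev, y in zip(years, years[1:])
            if counts[y] < (1 - max_drop) * counts[prev]]


def mean_visit_gap_days(visit_dates: Iterable[date]) -> Optional[float]:
    """Average gap in days between consecutive distinct visit dates for one patient."""
    ordered = sorted(set(visit_dates))
    if len(ordered) < 2:
        return None
    return mean((b - a).days for a, b in zip(ordered, ordered[1:]))


# Synthetic inputs for illustration only.
flagged = flag_drops({2017: 100, 2018: 95, 2019: 40})
gap = mean_visit_gap_days([date(2021, 1, 1), date(2021, 1, 22), date(2021, 2, 12)])
print(flagged, gap)
```

A patient-level gap computed this way would then be averaged across the cohort and compared with whatever reference benchmark the use-case specification names.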

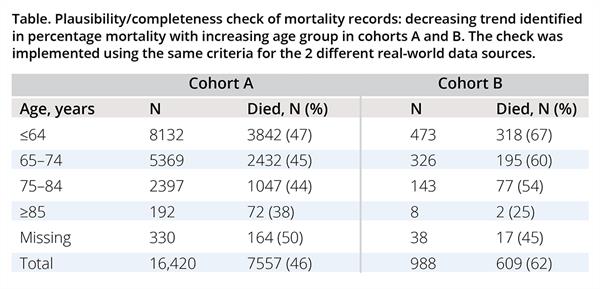

A further validation check examining the completeness of mortality event records identified a decreasing trend in percentage mortality with increasing age group (Table).7 Thus, in our example, fit-for-purpose RWD assessment revealed important insights into the nature of the 2 RWD sources, with varied levels of potential impact on estimation of the rwTTD use case.

Table. Plausibility/completeness check of mortality records: decreasing trend identified in percentage mortality with increasing age group in cohorts A and B. The check was implemented using the same criteria for the 2 different real-world data sources.
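The mortality check described in the Table can be sketched as a percentage of recorded deaths per age group, followed by a test for a strictly decreasing trend. The age-group boundaries, function names, and patient records below are hypothetical; the source does not report the groupings or values used.

```python
from bisect import bisect_right
from typing import Iterable, List, Optional, Sequence, Tuple


def pct_mortality_by_age_group(
    patients: Iterable[Tuple[int, bool]],      # (age in years, death recorded?)
    lower_bounds: Sequence[int],               # ascending lower age bound per group
) -> List[Optional[float]]:
    """Percentage of patients with a recorded death in each age group."""
    totals = [0] * len(lower_bounds)
    deaths = [0] * len(lower_bounds)
    for age, died in patients:
        i = bisect_right(lower_bounds, age) - 1
        if i < 0:
            continue                           # younger than the youngest group
        totals[i] += 1
        deaths[i] += int(died)
    return [100.0 * d / t if t else None for d, t in zip(deaths, totals)]


def decreasing_with_age(pcts: Sequence[Optional[float]]) -> bool:
    """True if mortality strictly decreases across the non-empty age groups."""
    vals = [p for p in pcts if p is not None]
    return len(vals) >= 2 and all(b < a for a, b in zip(vals, vals[1:]))


# Synthetic cohort: groups are 18-49, 50-64, and 65+ (hypothetical boundaries).
patients = ([(45, True)] * 2 + [(45, False)] * 2
            + [(60, True)] + [(60, False)] * 3
            + [(80, False)] * 4)
pcts = pct_mortality_by_age_group(patients, [18, 50, 65])
print(pcts, decreasing_with_age(pcts))
```

A decreasing trend is treated here as a signal worth investigating, in line with the completeness concern raised in the text, rather than as proof that death records are missing.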

A Call for Cross-Stakeholder Collaboration

We believe that a cross-stakeholder collaboration is required to arrive at a shared definition of use-case specifications, including relevant quality thresholds and identification of benchmarking resources for validation strategies. Iterative evolution of use-case–specific requirements, training for increased awareness of application methods, standardization of fit-for-purpose quality reporting, and transparency of findings are foundational capabilities to build a robust and reliable RWD ecosystem.

A shared definition of fitness for purpose will benefit all stakeholders. For health technology assessment (HTA) and regulatory decision makers, a shared definition would outline expectations in the form of reliability and quality requirements associated with specific uses of RWD. For sponsors and pharma, a shared definition of fitness for purpose would enable proactive mapping between available RWD and specific use cases, leading to more efficient identification of data gaps and improved engagement of data providers. For data providers, a well-defined use-case specification could drive the data extraction and curation pipeline as well as inform validation strategies for automated components of the data delivery pipeline. For physicians and patients, a well-defined specification for secondary use of healthcare data may help prioritize specific key data elements for EMR implementation, yield reliable RWE for clinical decision making, and minimize inefficiencies resulting from less-than-optimal evidence generation in support of patient care.

Concluding Thoughts

Ongoing efforts related to RWD transparency,10 terminologies, and protocols to assess data,3,4 as well as reporting templates (eg, STaRT-RWE11), are likely to require an agreement on fitness-for-use requirements and quality assessment criteria for RWD among relevant stakeholders. A relevance assessment framework is likely to drive benefits for all stakeholders involved in the specification development and maintenance effort.

References

1. United States Food & Drug Administration. Framework for FDA’s Real-World Evidence Program. Published 2018. https://www.fda.gov/media/120060/download. Accessed May 14, 2021.

2. Hampson G, Towse A, Dreitlein WB, Henshall C, Pearson SD. Real-world evidence for coverage decisions: opportunities and challenges. J Comp Eff Res. 2018;7(12):1133-1143.

3. Kahn MG, Callahan TJ, Barnard J, et al. A harmonized data quality assessment terminology and framework for the secondary use of electronic health record data. EGEMS (Wash DC). 2016;4(1):1244.

4. Mahendraratnam N, Silcox C, Mercon K, et al. Determining real-world data’s fitness for use and the role of reliability: Duke-Margolis Center for Health Policy. Published 2019. https://healthpolicy.duke.edu/publications/determining-real-world-datas-fitness-use-and-role-reliability. Accessed May 14, 2021.

5. Pearson SD, Dreitlein WB, Towse A, Hampson G, Henshall C. A framework to guide the optimal development and use of real-world evidence for drug coverage and formulary decisions. J Comp Eff Res. 2018;7(12):1145-1152.

6. ISPOR. Strategic Initiatives: Science Strategy. Published 2021. https://www.ispor.org/strategic-initiatives/science-strategy. Accessed May 14, 2021.

7. Desai KD, Chandwani S, Ru B, Reynolds MW, Christian JB, Estiri H. An oncology real-world data assessment framework for outcomes research. Poster presented at Virtual ISPOR 2021;17-20 May 2021.

8. Stewart M, Norden AD, Dreyer N, et al. An exploratory analysis of real-world end points for assessing outcomes among immunotherapy-treated patients with advanced non-small-cell lung cancer. JCO Clin Cancer Inform. 2019;3:1-15.

9. Blumenthal GM, Gong Y, Kehl K, et al. Analysis of time-to-treatment discontinuation of targeted therapy, immunotherapy, and chemotherapy in clinical trials of patients with non-small-cell lung cancer. Ann Oncol. 2019;30(5):830-838.

10. Orsini LS, Berger M, Crown W, et al. Improving transparency to build trust in real-world secondary data studies for hypothesis testing-why, what, and how: recommendations and a road map from the Real-World Evidence Transparency Initiative. Value Health. 2020;23(9):1128-1136.

11. Wang SV, Pinheiro S, Hua W, et al. STaRT-RWE: structured template for planning and reporting on the implementation of real world evidence studies. BMJ. 2021;372:m4856.
