Metamodeling in Health Economics and Outcomes Research

Section Editor: Koen Degeling, PhD

What is Metamodeling?

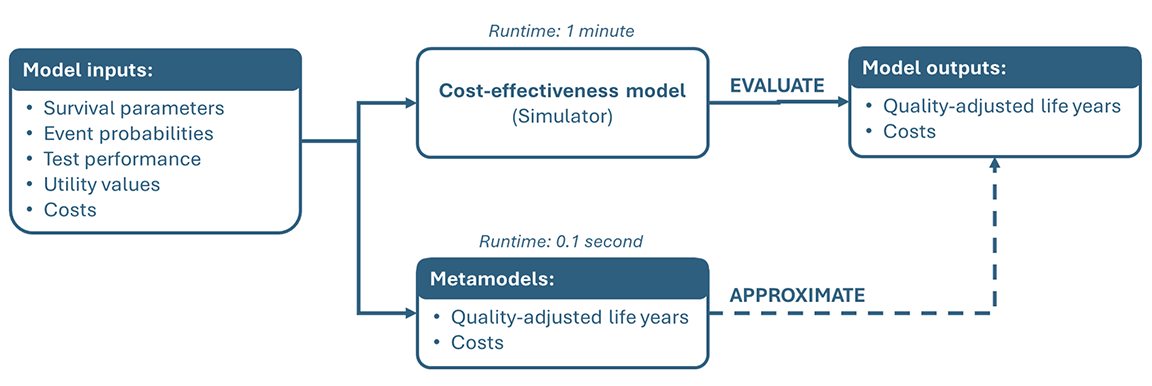

Metamodels are models of existing models and are also known as surrogate models or emulators. A metamodel approximates the outcomes that an existing target model or “simulator” would produce for a given set of input parameters (Figure 1). In doing so, the metamodel provides a fast approximation of a model that may be too computationally demanding (ie, slow) to use directly.

Figure 1. Conceptual illustration of metamodeling based on Degeling et al.1 A computationally demanding target model maps input parameters (eg, clinical and economic parameters) to model outputs (eg, costs and quality-adjusted life years). A metamodel is fitted to input-output pairs generated from the target model and can subsequently be used to rapidly approximate model outcomes for new input combinations.

What Can It Be Used For?

In general, metamodeling is useful for 2 types of applications. The first is simulation modeling, where metamodels are used to reduce the computational burden of complex models or analyses. The second is statistical modeling, where metamodels are used to improve understanding of the target model by analyzing statistical relationships between model inputs and outputs.

In health economics and outcomes research (HEOR), the simulation objective is typically the most relevant, and it is the focus of this article. In this context, metamodeling typically applies to cost‑effectiveness models and the analyses performed with them. The motivation for using metamodels here is to reduce the time needed for analyses that are computationally demanding, either because the model itself is complex (eg, an individual-level simulation) or because the analysis requires the model to be evaluated a large number of times. Key use cases include probabilistic (scenario) analyses, value of information analyses, and calibration or optimization algorithms.

How Does It Work?

Metamodeling reduces computational burden by replacing the target model in analyses with rapid predictions from the metamodel. A wide range of techniques can be used to develop metamodels. In its simplest form, a metamodel is a linear regression model fitted to the target model’s inputs and outputs. More flexible regression approaches, such as splines or generalized additive models, can capture smooth nonlinear relationships. When the relationship between inputs and outputs is highly complex, machine‑learning methods such as random forests, gradient boosting, or neural networks may be appropriate. Gaussian process emulators can also be used, particularly when training datasets are relatively small.
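The simplest case described above can be sketched in a few lines. The example below is illustrative only: it is written in Python for brevity, and `target_model` is a hypothetical two-state cohort model standing in for a slow simulator, not a model from the literature. The input name `p_death`, its range, and the 20-cycle horizon are all assumptions made for the sketch.

```python
def target_model(p_death, cycles=20, discount=0.03):
    """Hypothetical stand-in for a slow simulator: discounted life years
    in a two-state (alive/dead) cohort model."""
    alive, life_years = 1.0, 0.0
    for t in range(cycles):
        life_years += alive / (1.0 + discount) ** t
        alive *= 1.0 - p_death
    return life_years


def fit_simple_linear(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


# Input-output pairs generated by running the target model on a grid of inputs.
inputs = [0.05 + 0.01 * i for i in range(11)]  # p_death from 0.05 to 0.15
outputs = [target_model(p) for p in inputs]

intercept, slope = fit_simple_linear(inputs, outputs)


def metamodel(p_death):
    """Fast approximation of target_model, intended only for the fitted range."""
    return intercept + slope * p_death


# Compare the approximation with the target model at an input not on the grid.
p_new = 0.123
print(f"target: {target_model(p_new):.3f}  metamodel: {metamodel(p_new):.3f}")
max_abs_error = max(abs(metamodel(p) - y) for p, y in zip(inputs, outputs))
print(f"largest absolute error on the grid: {max_abs_error:.3f} life years")
```

Because the toy model is curved in `p_death`, the straight line leaves a visible approximation error. That is the kind of situation in which the more flexible techniques listed above may be worth considering.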

What Makes It Different From Other Modeling Methods?

Metamodeling is different from other modeling approaches commonly used in HEOR in that it requires an existing target model or “simulator” to be available. A metamodel cannot be developed in isolation, and its validity depends on the validity of the model it approximates.

Conceptually, the process of metamodeling resembles statistical modeling: A dataset is generated, a model is fitted, and predictive performance is assessed. The key difference is that, in metamodeling, the dataset is simulated from the target model rather than observed in real‑world data. These simulated data are generated under a controlled experimental design. As a result, they are not affected by the measurement error or confounding typical of empirical datasets. Metamodels can therefore achieve very high predictive accuracy.

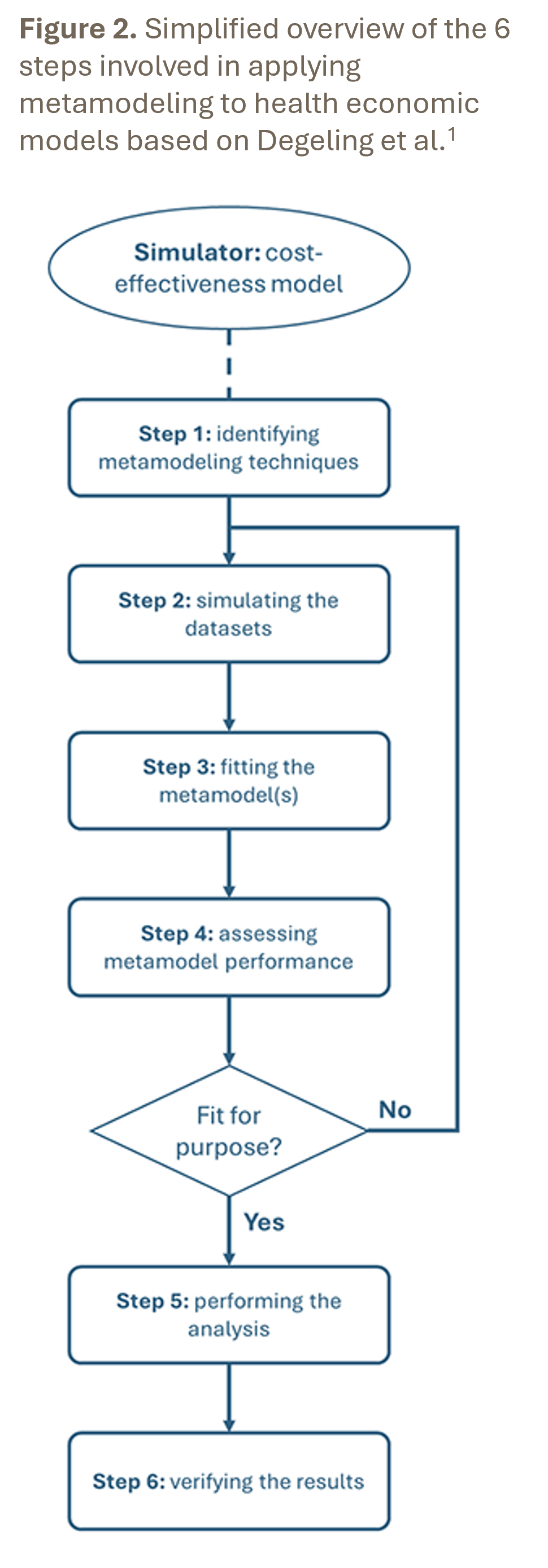

What Are the Steps in Applying Metamodeling?

A structured approach to metamodeling in HEOR is provided by the 6‑step process (Figure 2) described by Degeling et al.1 An essential prerequisite is the availability of a validated target model that is considered fit for purpose, because a metamodel can never compensate for shortcomings in the original model. Note that most techniques require a separate metamodel to be developed for each outcome. Correlation between outcomes can be accounted for by using multivariate techniques or by including certain outcomes as predictors of other outcomes in a stepwise approach.

Step 1 – Identify one or multiple suitable metamodeling techniques based on the types and number of input and output parameters.

Step 2 – Simulate datasets for training and testing the metamodel(s) using the existing target model based on defined parameter ranges, preferably using an efficient design of experiments.

Step 3 – Fit the metamodel using the chosen technique based on the simulated training dataset.

Step 4 – Assess the performance of the metamodel(s) on the simulated testing dataset using metrics that are relevant for the intended decision problem.

Step 5 – Conduct the required analysis using the metamodel(s) to reduce the runtime.

Step 6 – Verify whether the results obtained with the metamodel(s) are consistent with the original target model.
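The 6 steps can be sketched end to end on a toy problem. Everything specific in the example below is an assumption made for illustration: the hypothetical `target_model`, the parameter ranges in `RANGES`, the choice of a linear regression with an interaction term, the sample sizes, and the uniform distributions used in the probabilistic analysis. It uses Python with only the standard library, and a real application would normally rely on established packages for the design of experiments and model fitting.

```python
import math
import random

RANGES = [(0.05, 0.15), (1000.0, 3000.0)]  # assumed ranges: p_death, cost per cycle


def target_model(p_death, cost_cycle, cycles=20, discount=0.03):
    """Hypothetical stand-in for a slow simulator: discounted cost of a cohort."""
    alive, total = 1.0, 0.0
    for t in range(cycles):
        total += alive * cost_cycle / (1.0 + discount) ** t
        alive *= 1.0 - p_death
    return total


def latin_hypercube(n, ranges, rng):
    """Step 2: one draw per stratum for each input, paired in random order."""
    columns = []
    for low, high in ranges:
        u = [(i + rng.random()) / n for i in range(n)]
        rng.shuffle(u)
        columns.append([low + ui * (high - low) for ui in u])
    return list(zip(*columns))


def features(x):
    """Step 1 (illustrative choice): linear regression with an interaction,
    on inputs rescaled to [0, 1] to keep the normal equations well conditioned."""
    u, v = [(xi - low) / (high - low) for xi, (low, high) in zip(x, RANGES)]
    return [1.0, u, v, u * v]


def fit_ols(rows, y):
    """Step 3: solve the normal equations by Gauss-Jordan elimination."""
    k = len(rows[0])
    a = [[sum(r[i] * r[j] for r in rows) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(rows, y))] for i in range(k)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(k):
            if r != col:
                factor = a[r][col] / a[col][col]
                a[r] = [p - factor * q for p, q in zip(a[r], a[col])]
    return [a[i][k] / a[i][i] for i in range(k)]


def predict(beta, x):
    return sum(b * f for b, f in zip(beta, features(x)))


rng = random.Random(2024)

# Step 2: separate designs for training and testing.
train = latin_hypercube(100, RANGES, rng)
test = latin_hypercube(50, RANGES, rng)
y_train = [target_model(*x) for x in train]
y_test = [target_model(*x) for x in test]

# Step 3: fit the metamodel on the training data.
beta = fit_ols([features(x) for x in train], y_train)

# Step 4: performance on the held-out test set.
residuals = [predict(beta, x) - y for x, y in zip(test, y_test)]
rmse = math.sqrt(sum(e * e for e in residuals) / len(residuals))
mean_y = sum(y_test) / len(y_test)
r2 = 1.0 - sum(e * e for e in residuals) / sum((y - mean_y) ** 2 for y in y_test)

# Step 5: probabilistic analysis run on the metamodel (uniform draws assumed).
draws = [tuple(rng.uniform(lo, hi) for lo, hi in RANGES) for _ in range(10_000)]
mean_cost_meta = sum(predict(beta, x) for x in draws) / len(draws)

# Step 6: rerun a subset on the target model and compare with the metamodel.
subset = draws[:200]
mean_target = sum(target_model(*x) for x in subset) / len(subset)
mean_meta = sum(predict(beta, x) for x in subset) / len(subset)
rel_diff = abs(mean_meta - mean_target) / mean_target

print(f"RMSE={rmse:.1f}  R2={r2:.4f}  mean cost={mean_cost_meta:.0f}  rel diff={rel_diff:.4%}")
```

RMSE and R² are generic accuracy metrics used here for brevity. In practice, Step 4 would also look at metrics tied to the decision, such as whether the metamodel identifies the same preferred strategy as the target model.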

There are also some areas where caution is needed when developing and using metamodels. First, metamodels make it easy to explore alternative scenarios, including scenarios beyond the evidence base for which the target model was developed and validated. This effectively constitutes a form of extrapolation that should be performed with caution. Second, the value of a metamodel depends on its validation, which should be performed with care. Computational efficiency should not come at the expense of confidence in the outcomes obtained.

In terms of software, metamodels in HEOR have mostly been developed in R, reflecting the availability of tools for design of experiments, regression and machine learning, and advanced analyses such as value of information analysis. Metamodels can also be developed in R when the target model has been implemented in other software, such as Microsoft Excel.

What is the Current Level of Adoption of Metamodeling in HEOR?

Metamodels are widely used in fields such as engineering and environmental modeling, where high‑fidelity simulators are computationally expensive. In HEOR, however, applications remain relatively limited and are still more common in academic work than in routine health technology assessment (HTA) submissions.

Nevertheless, there are impactful examples demonstrating the value of metamodeling. The Sheffield Accelerated Value of Information (SAVI) tool,2 for example, uses metamodeling to enable rapid value of information analysis based on probabilistic analysis output.
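As a sketch of what regression-based estimation of this kind involves, the idea can be written compactly. The notation is assumed here for illustration and is not taken from this article: $\mathrm{NB}_d^{(k)}$ is the net benefit of strategy $d$ in probabilistic analysis sample $k = 1, \dots, K$, and $\phi^{(k)}$ holds the sampled values of the parameters of interest. A regression metamodel $g_d$ is fitted for each strategy, and the expected value of partial perfect information (EVPPI) is approximated from the fitted values, broadly following the nonparametric regression approach of Strong et al.2

$$
\mathrm{NB}_d^{(k)} = g_d\bigl(\phi^{(k)}\bigr) + \varepsilon^{(k)}, \qquad
\widehat{\mathrm{EVPPI}} = \frac{1}{K}\sum_{k=1}^{K} \max_d \hat{g}_d\bigl(\phi^{(k)}\bigr) \;-\; \max_d \frac{1}{K}\sum_{k=1}^{K} \hat{g}_d\bigl(\phi^{(k)}\bigr)
$$

Because only the existing probabilistic analysis sample is needed, no additional runs of the target model are required.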

Uptake of metamodeling may increase further as cost‑effectiveness models continue to become more complex and as computationally demanding analyses increasingly become part of HTA guidelines. These include probabilistic analyses and value of information analyses that are now mandatory in some jurisdictions.

An important area for further development is clearer guidance on validation standards, with an emphasis on decision‑relevant accuracy, which would also support the assessment of metamodels by others.

What Are Some Key Readings?

For readers interested in a detailed methodological overview, the structured introduction by Degeling et al provides a starting point.1 An applied example by Koffijberg et al illustrates the use of metamodeling to facilitate optimization of colorectal cancer screening strategies.3

Did you enjoy reading this article? Or do you have suggestions to improve the section or methods we should cover in future editions? Please share your feedback with the Value & Outcomes Spotlight Editorial Office.

References

1. Degeling K, IJzerman MJ, Lavieri MS, Strong M, Koffijberg H. Introduction to metamodeling for reducing computational burden of advanced analyses with health economic models: a structured overview of metamodeling methods in a 6-step application process. Med Decis Making. 2020;40(3):348-363. doi: 10.1177/0272989X20912233

2. Strong M, Oakley JE, Brennan A. Estimating multiparameter partial expected value of perfect information from a probabilistic sensitivity analysis sample: a nonparametric regression approach. Med Decis Making. 2014;34(3):311-326. doi: 10.1177/0272989X13505910

3. Koffijberg H, Degeling K, IJzerman MJ, Coupé VMH, Greuter MJE. Using metamodeling to identify the optimal strategy for colorectal cancer screening. Value Health. 2021;24(2):206-215. doi: 10.1016/j.jval.2020.08.2099